Vector databases for LLM apps are a key component of modern AI applications such as chatbots, search engines, and recommendation platforms. If you’re building AI applications, understanding how they work is critical.

In this guide, we will learn about FAISS, Pinecone, Chroma, embeddings, and how vector search works behind the scenes in real-world applications.

📌 Table of Contents

- What are vector databases

- What are embeddings

- How vector search works

- FAISS vs Pinecone vs Chroma

- Use cases and best practices

What Are Vector Databases for LLM Apps?

Vector databases for LLM apps store data as numerical vectors instead of traditional rows and columns. Each item is saved as an array of numbers that marks a point in a multidimensional space. A vector database can hold a large dataset and find the relevant information in it by comparing embeddings.

Why Important?

- Fast similarity search

- Handles unstructured data

- Boost AI applications

- Handles large data stores efficiently

🧠 What Are Embeddings?

Embeddings are numerical representations of text, images, documents, or any other structured or unstructured data. For text, embeddings are generated from words, phrases, or tokens (the units of text a model actually processes).

Example:

Text:

“AI is powerful”

Embedding (shortened for illustration; real embeddings typically have hundreds or thousands of dimensions):

[0.21, 0.45, 0.78, 0.67]

Key Points:

- Captures meaning, not just words

- Similar text → similar vectors

- Used in search and recommendation features
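To make "similar text → similar vectors" concrete, here is a minimal sketch. The four-dimensional vectors are hand-written numbers chosen for illustration, not output from a real embedding model, and the two extra sentences are hypothetical examples.

```python
import math

# Hand-written toy vectors (assumption): a real embedding model would produce these.
embeddings = {
    "AI is powerful": [0.21, 0.45, 0.78, 0.67],
    "Machine learning is strong": [0.25, 0.40, 0.80, 0.60],
    "I like pizza": [0.90, 0.10, 0.05, 0.20],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

query = embeddings["AI is powerful"]
sim_related = cosine_similarity(query, embeddings["Machine learning is strong"])
sim_unrelated = cosine_similarity(query, embeddings["I like pizza"])

print(f"related:   {sim_related:.3f}")
print(f"unrelated: {sim_unrelated:.3f}")
```

With these toy numbers, the related sentence scores much closer to 1.0 than the unrelated one, which is the property vector search relies on.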

🔍 How Vector Search Works

Vector search finds similar data based on the distance between vectors. The stored vectors closest to the query vector are included in the result set. Two common ways to measure closeness are cosine similarity and Euclidean distance.

Step-by-Step:

- Take the query text (split it into chunks only if it is long)

- Convert it into an embedding

- Compare with stored vectors

- Find closest matches

- Return relevant results
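The steps above can be sketched as a brute-force search. This is only an illustration: the document IDs and three-dimensional vectors are made up, the query vector is assumed to have already come from an embedding model, and real vector databases typically use approximate nearest-neighbor indexes rather than scanning every vector.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def search(query_vector, stored, k=2):
    """Compare the query with every stored vector and return the top-k (id, score) pairs."""
    scored = [(doc_id, cosine_similarity(query_vector, vec))
              for doc_id, vec in stored.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)  # highest similarity first
    return scored[:k]

# Hypothetical stored vectors; a plain dict stands in for the vector database.
stored = {
    "doc-a": [0.9, 0.1, 0.0],
    "doc-b": [0.1, 0.9, 0.0],
    "doc-c": [0.8, 0.2, 0.1],
}

query_vector = [1.0, 0.0, 0.0]  # pretend this is the embedded query
results = search(query_vector, stored, k=2)

for doc_id, score in results:
    print(doc_id, round(score, 3))  # doc-a and doc-c rank first
```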

Distance Metrics:

- Cosine similarity

- Euclidean distance
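A small sketch of how the two metrics differ, using made-up two-dimensional vectors: cosine similarity looks only at direction, while Euclidean distance also reacts to length.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def euclidean_distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

a = [1.0, 0.0]
b = [2.0, 0.0]  # same direction as a, but twice as long
c = [0.0, 1.0]  # perpendicular to a

cos_ab = cosine_similarity(a, b)   # 1.0: identical direction
euc_ab = euclidean_distance(a, b)  # 1.0: still one unit apart
cos_ac = cosine_similarity(a, c)   # 0.0: unrelated direction
euc_ac = euclidean_distance(a, c)  # sqrt(2)

print(cos_ab, euc_ab, cos_ac, euc_ac)
```

Higher cosine similarity means more similar, whereas lower Euclidean distance means more similar, so the sort order flips depending on which metric is used.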

🔥 FAISS vs Pinecone vs Chroma

1. FAISS

Best for: Local vector search

- Developed by Meta

- High performance

- Open-source

2. Pinecone

Best for: Managed cloud service

- Fully managed

- Scalable

- Easy integration

3. Chroma

Best for: Lightweight apps

- Developer-friendly

- Simple setup

- Fast searching

- Open source

📊 Tool Comparison (Vector Databases for LLM Apps)

| Tool | Type | Best For | Scalability | Ease of Use |
| --- | --- | --- | --- | --- |
| FAISS | Local | High performance | Medium | Medium |
| Pinecone | Cloud | Production apps | High | Easy |
| Chroma | Local/Cloud | Small projects | Medium | Easy |

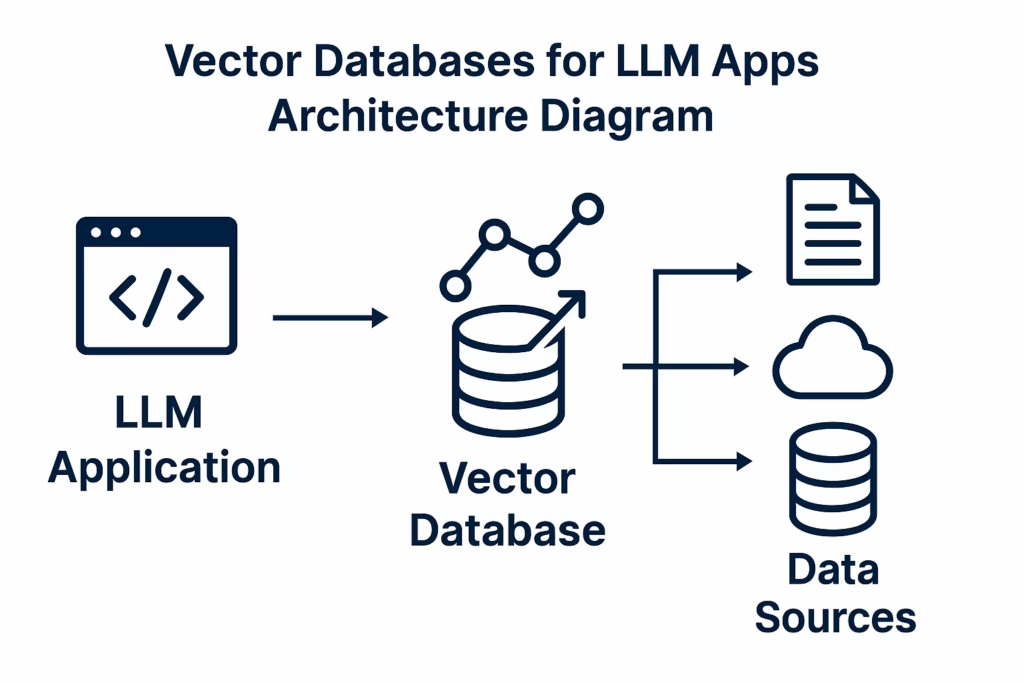

💡 Example: Using Vector Database in LLM App

Workflow:

- Upload documents

- Split them into small chunks

- Convert the chunks into embeddings

- Store in vector DB

- Query → retrieve → generate answer
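The workflow above can be sketched end to end in plain Python. Everything here is a stand-in chosen for illustration: `toy_embed` is a hypothetical word-count function (it matches words, not meaning, unlike a real embedding model), a list plays the role of the vector DB, the two sample documents are invented, and the final step only builds the prompt instead of calling an LLM.

```python
import math
import re
from collections import Counter

def chunk_text(text, max_words=8):
    """Split a document into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]

def toy_embed(text):
    """Stand-in for a real embedding model: a sparse word-count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine_similarity(a, b):
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Upload documents -> chunk -> embed -> store.
documents = [
    "FAISS is an open-source library developed by Meta for fast local vector search.",
    "Pinecone is a fully managed cloud service that scales with your data.",
]
store = []  # list of (chunk, embedding) pairs standing in for the vector DB
for doc in documents:
    for chunk in chunk_text(doc):
        store.append((chunk, toy_embed(chunk)))

# Query -> retrieve -> build the prompt an LLM would answer from.
def retrieve(question, k=1):
    q = toy_embed(question)
    ranked = sorted(store, key=lambda item: cosine_similarity(q, item[1]), reverse=True)
    return [chunk for chunk, _ in ranked[:k]]

question = "Which service is fully managed in the cloud?"
context = retrieve(question, k=1)
prompt = f"Answer using this context:\n{context[0]}\n\nQuestion: {question}"
print(prompt)
```

In a real app, `toy_embed` would be replaced by calls to an embedding model and `store` by FAISS, Pinecone, or Chroma; the overall shape of the pipeline stays the same.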

🚀 Use Cases of Vector Databases

- Chatbots with memory

- Semantic search

- Recommendation systems

- Document Q&A

⚡ Benefits

- Fast search capability

- Better relevance

- Scalable AI apps

- Handles large data

- Returns relevant information

⚠️ Challenges

- Storage cost

- Complexity

- Latency on large datasets (structured or unstructured)
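For a rough feel of the storage cost, a back-of-the-envelope estimate is number of vectors × dimensions × bytes per value. The figures below (one million vectors, 768 dimensions, 4-byte floats) are assumptions for illustration, and the estimate ignores index overhead and metadata.

```python
def raw_vector_bytes(num_vectors, dimensions, bytes_per_value=4):
    """Size of the raw vectors alone, assuming fixed-width values (4 bytes = float32)."""
    return num_vectors * dimensions * bytes_per_value

size_bytes = raw_vector_bytes(num_vectors=1_000_000, dimensions=768)
size_gib = size_bytes / (1024 ** 3)

print(f"{size_bytes:,} bytes (~{size_gib:.2f} GiB)")
```

Doubling either the number of vectors or the embedding dimension doubles this figure, which is why chunk size and model choice both affect cost.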

📈 Best Practices

- Use a suitable embedding model

- Optimize chunk size

- Choose the right database

- Monitor performance
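As one way to experiment with chunk size, here is a minimal word-based chunker. The `overlap` parameter is an addition for illustration (overlapping chunks are a common way to avoid cutting a sentence's context in half), and the default values are arbitrary assumptions to tune against your own data.

```python
def chunk_words(text, chunk_size=5, overlap=2):
    """Split text into chunks of chunk_size words, each sharing `overlap` words with the previous one."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks

text = "one two three four five six seven eight nine ten"
chunks = chunk_words(text, chunk_size=5, overlap=2)

for chunk in chunks:
    print(chunk)
```

Smaller chunks give more precise matches but less context per result; larger chunks do the opposite, so it is worth measuring retrieval quality at a few sizes.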

🧠 When to Use Vector Databases for LLM Apps

Use vector databases for LLM apps when:

- Working with large documents

- Building AI search systems

- Creating chatbots with knowledge/memory

- Answering questions over documents (Document Q&A)

- Adding recommendation features

✅ Conclusion

Vector databases for LLM apps are essential for building intelligent AI systems. With tools like FAISS, Pinecone, and Chroma, developers can create scalable, high-performance applications.

Understanding and implementing embeddings and vector search is the key to unlocking modern AI capabilities.

❓ FAQs

What are vector databases?

Databases that store embeddings for similarity search.

What is an embedding?

A numerical representation of data.

Which is best: FAISS or Pinecone?

FAISS for local, Pinecone for cloud apps.

Why use vector search?

To find similar data efficiently.

🔥 Final Thoughts

If you’re building AI apps in 2026, mastering vector databases for LLM apps is a must-have skill. With a vector database, you can build fast search and recommendation applications. 🚀